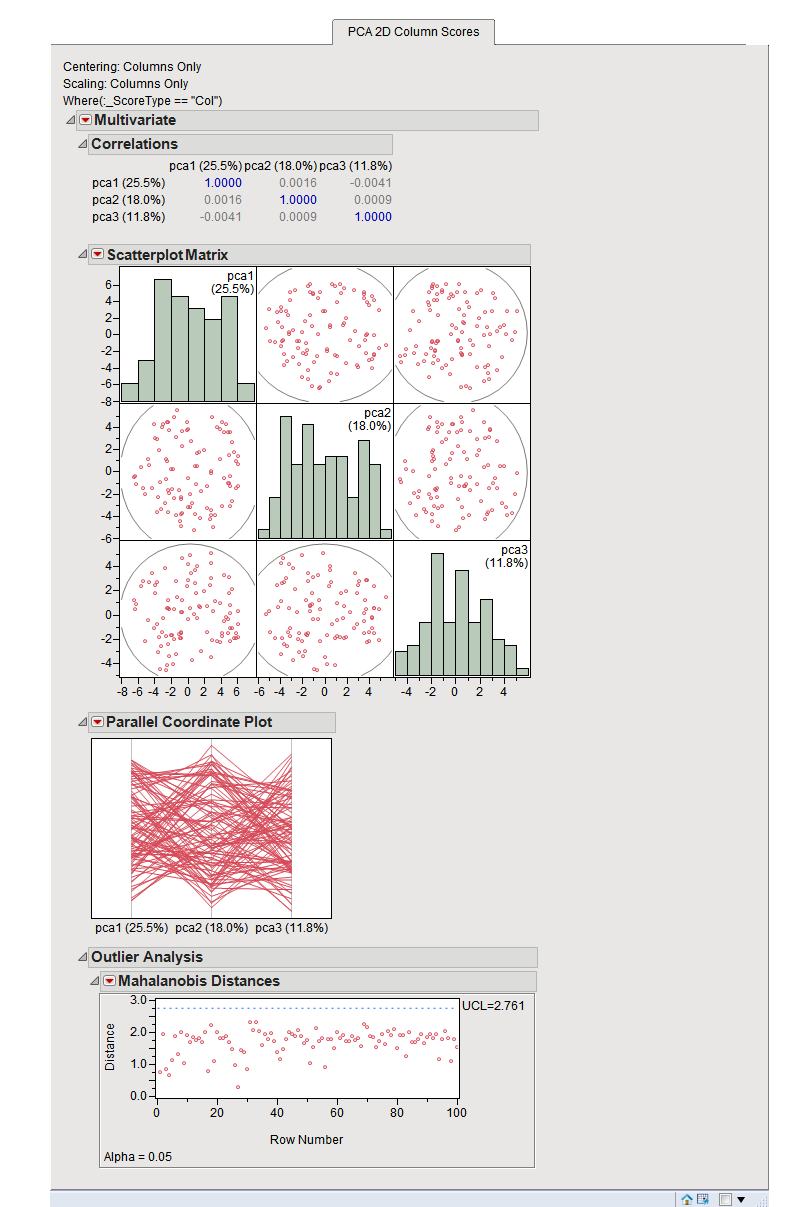

It’s simple to see that the covariance matrix is a square matrix of order num_features. If the features are not all zero mean initially, we can subtract the mean of column i from each entry in that column and compute the covariance matrix. Here, X.T is the transpose of the matrix X. If the features are all zero mean, then the covariance matrix is given by X.T X. Suppose the data matrix X is of dimensions num_observations x num_features, we perform eigenvalue decomposition on the covariance matrix of X. So how do we find the principal components? And PCA is essentially a projection of the dataset onto the principal components. These directions are called principal components. So it's important to find the directions of maximum variance in the dataset. The motivation behind the algorithm is that there are certain features that capture a large percentage of variance in the original dataset.

Meaning it reduces the dimensionality of the feature space.

How Does Principal Component Analysis (PCA) Work?īefore we go ahead and implement principal component analysis (PCA) in scikit-learn, it’s helpful to understand how PCA works.Īs mentioned, principal component analysis is a dimensionality reduction algorithm.
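To see why the eigenvectors of the covariance matrix are the directions of maximum variance, it can help to write the argument out in matrix form. The symbols here are introduced only for convenience: n stands for num_observations, d for num_features, μ for the vector of column means, and X̄ for the centered data matrix.

$$
\begin{aligned}
\bar{X} &= X - \mathbf{1}\mu^\top, \qquad C = \frac{1}{n-1}\,\bar{X}^\top \bar{X} \in \mathbb{R}^{d \times d},\\
\operatorname{Var}(\bar{X}w) &= \frac{1}{n-1}\,\lVert \bar{X}w \rVert^2 = w^\top C\, w \quad \text{for a unit vector } w,\\
C v_i &= \lambda_i v_i, \qquad \lambda_1 \ge \lambda_2 \ge \dots \ge \lambda_d \ge 0,\\
\max_{\lVert w \rVert = 1} w^\top C\, w &= \lambda_1, \quad \text{attained at } w = v_1,\\
Z &= \bar{X} V_k, \qquad V_k = [\,v_1 \ \cdots \ v_k\,] \in \mathbb{R}^{d \times k}.
\end{aligned}
$$

Repeating the same maximization over directions orthogonal to v_1 gives v_2, and so on, which is why the k eigenvectors with the largest eigenvalues are taken as the first k principal components, and Z is the reduced n x k dataset.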
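The steps described above (center, compute the covariance matrix, eigendecompose, project) can be sketched in a few lines of NumPy. This is a minimal illustration, not how scikit-learn implements PCA (its `PCA` class is based on a singular value decomposition of the centered data); the helper name `pca_eig` and the toy dataset are hypothetical and chosen only for this example.

```python
import numpy as np


def pca_eig(X, n_components):
    """Hypothetical helper: PCA via eigendecomposition of the covariance matrix.

    X has shape (num_observations, num_features). Returns the data projected
    onto the top `n_components` principal components, the components
    themselves (one per column), and the variance along each of them.
    """
    X = np.asarray(X, dtype=float)
    # Center the data: subtract each column's mean so every feature has zero mean.
    X_centered = X - X.mean(axis=0)

    # Covariance matrix, a square matrix of order num_features.
    num_observations = X_centered.shape[0]
    cov = X_centered.T @ X_centered / (num_observations - 1)

    # eigh is meant for symmetric matrices: it returns real eigenvalues in
    # ascending order and orthonormal eigenvectors as columns.
    eigvals, eigvecs = np.linalg.eigh(cov)

    # Reorder so the direction with the largest variance comes first.
    order = np.argsort(eigvals)[::-1]
    eigvals = eigvals[order]
    eigvecs = eigvecs[:, order]

    # Keep the top directions and project the centered data onto them.
    components = eigvecs[:, :n_components]
    X_projected = X_centered @ components
    return X_projected, components, eigvals[:n_components]


# Toy data (made up for illustration): 200 observations of 3 features,
# where the second feature is roughly twice the first.
rng = np.random.default_rng(0)
latent = rng.normal(size=(200, 1))
X = np.hstack([latent, 2 * latent, rng.normal(scale=0.1, size=(200, 1))])
X = X + rng.normal(scale=0.05, size=X.shape)

X_proj, components, variances = pca_eig(X, n_components=2)
print(X_proj.shape)  # (200, 2)

# Fraction of the total variance captured by each kept component.
total_variance = np.trace(np.cov(X, rowvar=False))
print(variances / total_variance)
```

Using `np.linalg.eigh` rather than `np.linalg.eig` takes advantage of the fact that the covariance matrix is symmetric. The sign of each eigenvector is arbitrary, so the projected coordinates may differ in sign from another implementation, such as scikit-learn's, while describing the same directions.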
